A video montage of 640 selfie images from London. The individual images are aligned identically with respect to eye position and sorted by head tilt angle. The blending animation is designed to create abstractions of the individual images while still maintaining a degree of fidelity to image details and context.

selfiecity London

Investigating the style of self-portraits (selfies) in six cities across the world using a mix of theoretical, artistic and quantitative methods.

This is a special edition of the original selfiecity project for the Big Bang Data exhibition, Somerset House, London.

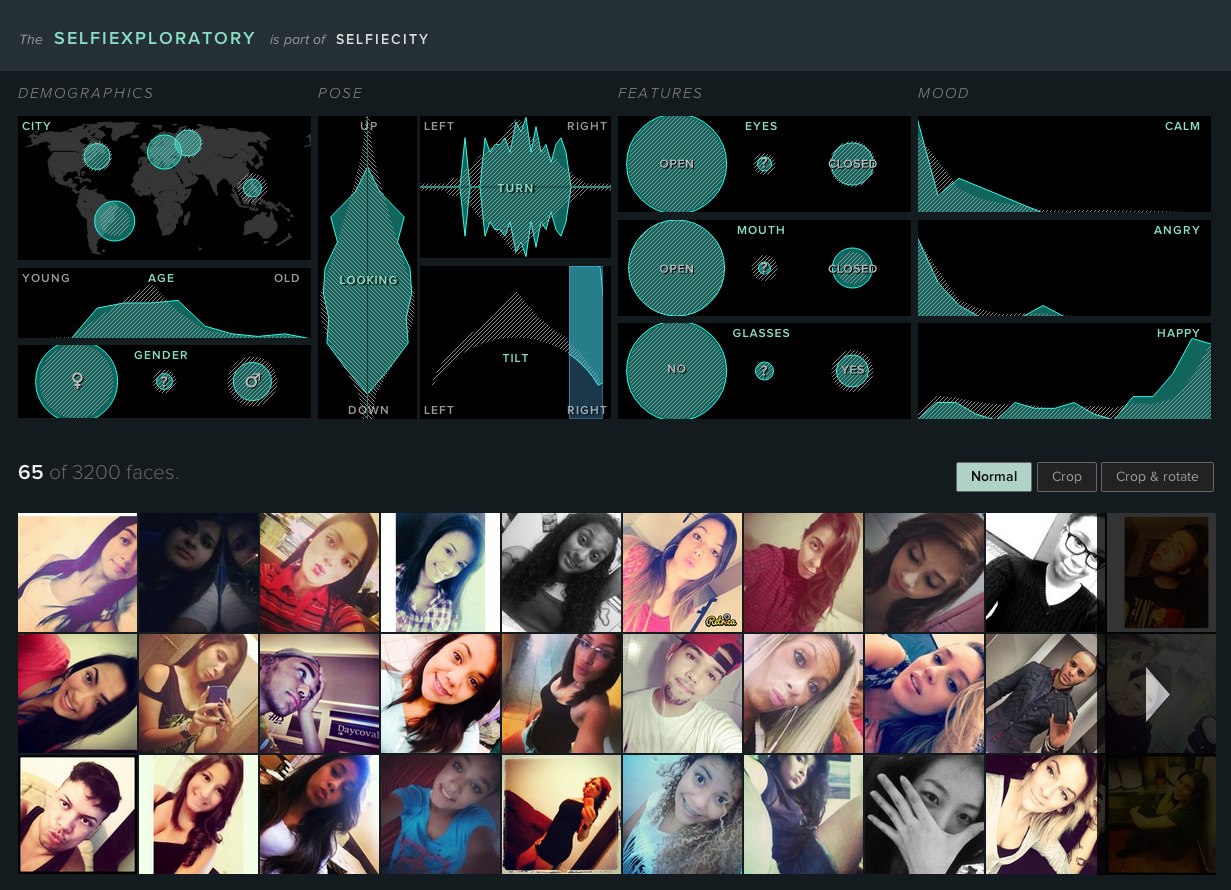

We collected and analyzed 152,462 Instagram images from central London for the week of September 21–27, 2015, and compared the results with our findings from the five other cities. You can experiment with the new data in the selfiexploratory; an overview of the London findings follows.

Demographics

First, here is how London selfies compare with selfies from the other cities in terms of demographics, based on a mixture of automatic methods and human judgments:

- The estimated average age of male selfies in London (28.0 years) is higher than the average for the other five cities (26.3 years).

- The estimated average age of female selfies in London is very similar to that of the other cities (23.7 years vs. 23.6 years).

- In London we found 62.8% female selfies (versus 37.2% male selfies). For comparison, the proportions of female selfies in the other cities were 55.2% in Bangkok, 59.4% in Berlin, 61.6% in New York, 65.4% in São Paulo, and 82.0% in Moscow. By these figures, London's female-to-male selfie ratio is the third highest, after Moscow and São Paulo.
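The city ranking in the last item can be checked directly from the quoted percentages. The following is a minimal Python sketch, assuming each selfie was labelled either female or male (so the male share is 100 minus the female share). It converts each city's female share into a female-to-male ratio and sorts the cities by that ratio. The dictionary and function names are illustrative only and are not part of the selfiecity tooling.

```python
# Share of female selfies per city in percent, as quoted in the demographics list.
female_share = {
    "Bangkok": 55.2,
    "Berlin": 59.4,
    "London": 62.8,
    "New York": 61.6,
    "São Paulo": 65.4,
    "Moscow": 82.0,
}


def female_to_male_ratio(pct_female: float) -> float:
    """Convert a female share (percent) into a female:male ratio.

    Assumes every selfie was labelled either female or male, so the
    male share is simply 100 minus the female share.
    """
    return pct_female / (100.0 - pct_female)


# Rank cities from highest to lowest female:male ratio.
ranking = sorted(
    female_share,
    key=lambda city: female_to_male_ratio(female_share[city]),
    reverse=True,
)

for rank, city in enumerate(ranking, start=1):
    print(f"{rank}. {city}: {female_to_male_ratio(female_share[city]):.2f}")
```

Because p / (100 − p) increases monotonically with p, sorting by the female share alone would give the same order. The ratio is computed here only because the comparison is stated as a ratio.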